Demystifying A.I. is urgent, but not hard

Neural Networks are not magical, but we're way deeper into it than you realized

What’s this about?

Fear and hype about A.I. are everywhere, leading to increasingly wild speculation about what’s happening in the world of software.

Conspiracy theories range from high-power military databases crunching a whole world of information (my default) to machines being demonically possessed and coming to life with an agenda (what I’m trying to steer people away from).

I recently wrote up my uneducated guess on this Substack account and received some interesting feedback from a programmer friend of mine, who shared useful links.

How computers “see”

Despite becoming familiar with daily usage of computers, video games, smartphones, and apps, the average person is still clueless about coding, software engineering and the mathematical logic behind everything machines do. This is where real nerds can try to step up and explain it to us. Thankfully there’s at least one very smart guy who is gifted with this ability (20 min):

If you watch the video above, you’ll see that most of what these Neural Networks are doing is a matter of inputting digitized data (whatever is relevant), letting the computer break it down bit-by-bit using algebraic formulas, and then remembering and associating certain patterns that emerge. Why? Depends what you want to do:

Recognize a face for a biometric tracking system

Recognize a tree for self-driving vehicles to avoid

Recognize a weed for an AI-driven crop-sprayer to hit with pinpoint accuracy

Recognize an enemy of the state for a killer android to hit with pinpoint accuracy

Or, in the case of tools that let you create deepfakes of voices, music, video, or images…

Output a generated pattern similar enough to what was input that it is recognizable by its own standards
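As a rough illustration of the “algebraic formulas” mentioned above, here is a minimal sketch of a single artificial neuron in Python. The pixel values, weights, and bias are made-up numbers chosen for illustration (a real network learns millions of them during training), and the function names `sigmoid` and `neuron` are my own, not from the video.

```python
import math

def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs, plus a bias, passed through sigmoid."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(total)

# A made-up 2x2 grayscale "image", flattened to four brightness values (0..1).
bright_top = [1.0, 1.0, 0.0, 0.0]
bright_bottom = [0.0, 0.0, 1.0, 1.0]

# Hypothetical weights that respond to brightness in the top row.
weights = [2.0, 2.0, -2.0, -2.0]
bias = 0.0

top_score = neuron(bright_top, weights, bias)
bottom_score = neuron(bright_bottom, weights, bias)

# The neuron "fires" (score above 0.5) only for the pattern it is tuned to.
print(top_score > 0.5, bottom_score < 0.5)
```

A full network is many of these stacked in layers, with the weights adjusted automatically during training instead of being typed in by hand.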

The barcode analogy

The easiest way to understand this is to think of a classic barcode scanner, used in thousands of supermarkets at checkout for decades. Barcodes are a sequence of straight vertical lines, generated by a computer, for a computer. They always correlate directly with a series of numbers, which could just as easily be printed as numbers for human eyes (and in fact, they are). Already, by the very nature of this simple pattern being called a barcode, we remember that computers must be programmed to encode and decode information in order to turn one kind of data (a series of lines) into another (a series of numbers).
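To make the lines-to-numbers idea concrete, here is a small Python sketch that turns digits into bar patterns and back. To my knowledge the 7-module patterns below match the left-hand digit patterns of the UPC-A symbology, but treat them as illustrative: a real barcode also has guard bars, mirrored right-hand patterns, and a check digit, all of which this sketch leaves out. The names `encode` and `decode` are my own.

```python
# 7-module patterns for the digits 0-9 (1 = dark bar, 0 = white gap).
# Assumption: these follow the left-hand digit patterns of UPC-A.
PATTERNS = {
    "0": "0001101", "1": "0011001", "2": "0010011", "3": "0111101",
    "4": "0100011", "5": "0110001", "6": "0101111", "7": "0111011",
    "8": "0110111", "9": "0001011",
}
# Reverse lookup table: bar pattern -> digit.
DIGITS = {bars: digit for digit, bars in PATTERNS.items()}

def encode(number):
    """Turn a string of digits into one long string of bars and gaps."""
    return "".join(PATTERNS[d] for d in number)

def decode(bars):
    """Cut the bars into 7-module chunks and look each one up."""
    chunks = [bars[i:i + 7] for i in range(0, len(bars), 7)]
    return "".join(DIGITS[c] for c in chunks)

bars = encode("2024")
print(bars.replace("1", "#").replace("0", " "))  # what the scanner "sees"
print(decode(bars))                              # what it turns that into
```

Nothing here is hidden or secret, which is why “encode” is a better word than “encrypt”: anyone with the lookup table can read the number back.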

The next evolution was QR (Quick Response) codes, which are also called “matrix barcodes”.[1] Unlike old-fashioned barcodes, these require digital cameras to find the exact dimensions of the code panel (using the three squares in the corners to recognize the 3D angle and distance of the code), and then break down what's inside of the panel using the black and white pattern instead of straight lines.

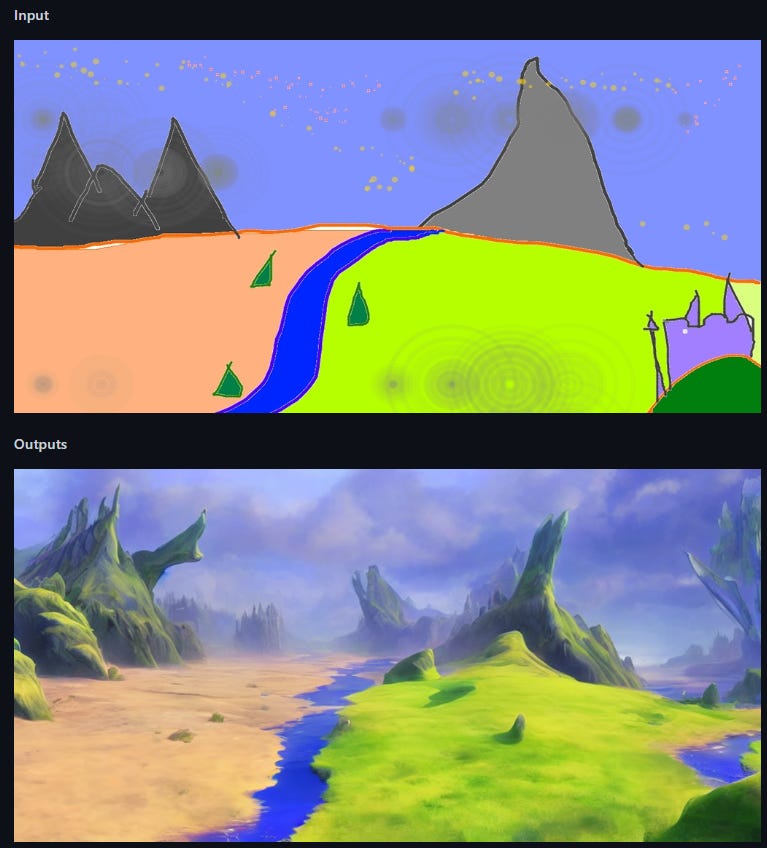

Neural Networks are essentially doing the same thing, but they have been trained (and the video explains HOW this happens) to recognize the outlines of everything they see, break it down into elements, and remember them for later. When a computer generates images in accordance with keywords or correlated images,[2] think of a computer generating a QR code.

Software can therefore “see” images much like our own eyes do, only it does so pixel-by-pixel with binary code: by recognizing the contours of forms by the changes in lighting values, and whether these are smooth and long, short and sudden, or jagged and noisy. We see that a round thing is a “ball” instead of a “circle” by the fact that it has a smooth gradient correlating with the lighting source. Optical illusions easily prove that people will mistake a flat circle for a ball when it has the right gradient pattern printed on it, especially if it’s designed to match the lighting of the room.
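The “changes in lighting values” idea can be sketched in a few lines of Python. This is a deliberately simple version that only compares neighboring pixels along one row; the brightness values are made up, and the function name `edge_strength` is my own. Real vision software uses more elaborate filters, but the principle is the same.

```python
def edge_strength(row):
    """Absolute brightness change between each pair of neighboring pixels."""
    return [abs(b - a) for a, b in zip(row, row[1:])]

# Made-up brightness values (0 = black, 255 = white) along one row of pixels.
smooth_gradient = [10, 20, 30, 40, 50, 60]   # gradual shading, like a ball
sharp_edge = [10, 10, 10, 200, 200, 200]     # sudden jump, like an outline

print(edge_strength(smooth_gradient))  # [10, 10, 10, 10, 10]
print(edge_strength(sharp_edge))       # [0, 0, 190, 0, 0]
```

A long run of small, equal changes reads as smooth shading, while a single large spike marks a contour.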

What does this really mean?

It means we’ve been living in the age of “AI” for much longer than we realized.

Everything we’ve been participating in online for two decades has been building up the “Neural Network” of Google especially, and, by extension, the intelligence agencies that they work for. They have a big hand in guiding the research and development of mass-market solutions for the purpose of steering society into a collective data farm.

You can see Neural Networks and “AI” all over the place once you know what to look for, even if you don’t tend to use them. Just look at Google Lens, which instantly detects, encodes, and converts visual data from your phone’s camera into something the software can put to use in various ways. I’ll be pulling the examples directly from Google’s own promotional website:

“Search what you see” — Recognize and look up specific objects online without needing words, including products, toys, and clothing

“Copy and translate text” — Recognize and automatically translate text in 3D space using Augmented Reality, in real time

“Step by step homework help” — Recognize equations and formulas and search them online for help with homework

“Identify plants and animals” — Recognize and pinpoint flora and fauna instantly with your camera, just by showing Google what you see

We’ve already fed Big (Military) Tech everything “easy” to produce. Smartphones and Web 2.0 have been a giant social experiment to collect audio-visual data, not to target ads to people on behalf of advertisers, but for the hardcore endgame military applications that are going to roll out in next-generation control systems.

They’ve hit a limit. Once you realize how far things have already gotten in the world of AI recognition software, you realize that every major technological trend has served a double purpose of digitizing the physical world and allowing the Neural Networks to digest it. But now they’re promoting Neural Networks openly, drawing attention to it so that people will engage and buzz about it. Why? There’s something they need that they can’t get from passive engagement.

StableDiffusion case study + languages

Back to the art generation question, my programmer friend pointed me toward an open source, small-scale app developed by two individuals (Robin Rombach and Patrick Esser), capable of running on a household computer, called StableDiffusion.

If you think about it, computers have always dealt with resolutions and visual “noise” in images. Anyone who has ever saved something as a JPEG or rendered a video knows how a computer has options for various qualities, squashing and compressing the image so that it takes up less space and looks uglier. Now think about a computer trying to reverse and upscale images, rather than compressing them. How hard would it be for a computer to learn that using Neural Networks? To take a noisy, small image and blow it up to make it crisp, clean, and with proper edges?

Apparently it would take over a hundred thousand dollars.

The AI world is buzzing with the power of large generative neural networks such as ChatGPT, Stable Diffusion, and more. These models are capable of impressive performance on a wide range of tasks, but due to their size and complexity, only a handful of organizations have the ability to train them. As a consequence, access to these models can be restricted by the organization that owns them, and users have no control over the data the model has seen during training.

That’s how we can help: at MosaicML, we make it easier to train large models efficiently, enabling more organizations to train their own models on their own data.
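To see why reversing compression is hard in the first place, here is a naive Python sketch that shrinks a tiny image and then blows it back up. This is not how StableDiffusion works; it is only meant to show that shrinking throws information away, so any upscaler has to guess what was there. The 4x4 checkerboard and the function names `downscale` and `upscale` are made up for illustration.

```python
def downscale(image):
    """Halve width and height by averaging each 2x2 block of pixels."""
    out = []
    for y in range(0, len(image), 2):
        row = []
        for x in range(0, len(image[0]), 2):
            block = [image[y][x], image[y][x + 1],
                     image[y + 1][x], image[y + 1][x + 1]]
            row.append(sum(block) // 4)
        out.append(row)
    return out

def upscale(image):
    """Double width and height by repeating each pixel (nearest neighbor)."""
    out = []
    for row in image:
        wide = [p for p in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out

# A made-up 4x4 checkerboard (0 = black, 255 = white).
original = [
    [0, 255, 0, 255],
    [255, 0, 255, 0],
    [0, 255, 0, 255],
    [255, 0, 255, 0],
]

small = downscale(original)
restored = upscale(small)

print(small)                 # [[127, 127], [127, 127]]
print(restored == original)  # False
```

The checkerboard collapses into flat gray, and no amount of pixel repetition brings it back. A trained network fills that gap with an educated guess based on the images it has already seen.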

Neural Networks can be trained to guess what the “upscaled” image should look like, and these models are trained with tagged images in massive databases. So in that sense, I was right about the need for massive tagged libraries, but just not at the correct point in the process. StableDiffusion used a dataset called “LAION-5B” for their training purposes. Here’s what they say about themselves:

We present a dataset of 5.85 billion CLIP-filtered image-text pairs … 2.3B contain English language, 2.2B samples from 100+ other languages and 1B samples have texts that do not allow a certain language assignment (e.g. names). Additionally, we provide several nearest-neighbor indices, an improved web interface for exploration & subset creation as well as detection scores for watermark and NSFW.

So you see, the “image-text pairs” must be accurate not only in English, but in every language that hopes to use these tools. That’s why Saudi Arabia, China, and other countries need to work overtime to catch up with Google (which they also use) and create their own datasets. And the NSFW detection is built right into this dataset, meaning it can also intelligently filter out obscene results.
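For a sense of what “CLIP-filtered” means in practice, here is a rough Python sketch. The general idea is that a model turns each image and each caption into a list of numbers, and pairs whose numbers point in different directions get thrown out. The three-number vectors, the captions, and the 0.5 cutoff below are all invented for illustration; real embeddings have hundreds of dimensions, and I am not reproducing LAION's actual threshold or pipeline.

```python
import math

def cosine_similarity(a, b):
    """1.0 means the vectors point the same way, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical (caption, image_vector, text_vector) triples.
pairs = [
    ("a photo of a dog", [0.9, 0.1, 0.0], [0.8, 0.2, 0.1]),
    ("click here to subscribe", [0.9, 0.1, 0.0], [0.0, 0.1, 0.9]),
]

THRESHOLD = 0.5  # illustrative cutoff, not LAION's actual value

# Keep only the pairs where the caption plausibly describes the image.
kept = [caption for caption, img, txt in pairs
        if cosine_similarity(img, txt) >= THRESHOLD]
print(kept)  # ['a photo of a dog']
```

The quality of the filter depends on the model doing the scoring, which is part of why coverage in each language matters.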

Funny thoughts

“Training” computers using “custom models” was a huge talking point of the A.I. Summit in Saudi Arabia last year, and now I get a better sense of what they meant. Controlling the rights to vast, organized, high quality datasets is necessary for training a machine how to “see” clearly and not get confused. You’ll want to check out my post on that:

Here’s a quote from my own post on how AI can be broken using invisible (to the human eye) “disruption patterns” embedded in the image:

In other words, AI is “fragile” and can be broken easily by those who know how to interfere with it. In the image above, a flagpole is recognized by the AI normally, but with an almost-invisible noise pattern overlaid it thinks that it’s a Labrador. The same goes for a hot air balloon, and a threshing machine. But the same “perturbation image” convinced the AI that a joystick was a Chihuahua.
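The fragility described above can be imitated with a toy example in Python. This is a made-up linear classifier with 100 invented weights, not the deep network or the perturbation method from the research quoted above; the labels are borrowed from the quote just for flavor. It nudges every pixel by a tiny amount in whichever direction hurts the score most.

```python
def classify(pixels, weights):
    """Toy classifier: positive weighted sum means 'flagpole'."""
    score = sum(p * w for p, w in zip(pixels, weights))
    return "flagpole" if score > 0 else "Labrador"

def sign(x):
    """Return 1 for positive numbers, -1 for negative, 0 for zero."""
    return (x > 0) - (x < 0)

weights = [0.5, -0.5] * 50   # 100 made-up weights
image = [0.52, 0.48] * 50    # 100 made-up pixel brightness values (0..1)

# Shift each pixel by at most 0.03, against the sign of its weight.
epsilon = 0.03
perturbed = [p - epsilon * sign(w) for p, w in zip(image, weights)]

print(classify(image, weights))      # flagpole
print(classify(perturbed, weights))  # Labrador
```

No single pixel changes by more than 3% of the brightness range, yet the many small nudges add up and flip the answer.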

Lastly, a reminder that AI is not actually “intelligent”. Here is an image generated by the world’s most advanced AI with the prompt “portrait of young Tobey Maguire crying”:

Next time I plan to explain more of my own personal tests with AI image generators and why I still think there’s more than Neural Network recognition happening.

[1] No, not The Matrix, just the idea of a grid of numbers.

[2] Remember Google Image Search, which has long had the ability to upload photos and search for “similar results”. Obviously computers can match similar things, and we’ve all been helping Google make those matches by using their tools.

I regularly use Google Lens and I suppose it is pretty impressive at recognizing stuff.

I've never really looked into the companies that offer to do the training, maybe there's something going on there.

One annoying application of this tech is more sophisticated bots on the internet writing generated articles, messages, comments, etc., and thereby making it harder to find real humans online.

AI still hasn't been able to generate valid software for any meaningful purpose. That would require the AI to vaguely understand the problem, translate it into procedural steps, then iterate over those steps rewriting parts that don't work.

So as long as it can't generate real low level code (machine code or assembly, not some highly abstracted API), the technology has reached its limit.

In fact, the mathematics to do this stuff has been around for a while, but the only reason it's taking off now is that processors have gotten exponentially faster. (The rate of speed increases has been slowing down as well, so unless a new computer architecture is introduced or quantum computing works, that's basically the end of it.)

What do you guys think about Neural Networks? Are they really going to change the world, or have they pretty much hit their limit? At this point I think it's all about the APPLICATIONS and the machines they'll be hooking the AI up to.